Human-in-the-Loop Automation: Where to Stop and Why

Learn where human-in-the-loop automation should stop, how to set risk thresholds, and how to design AI workflows that scale without breaking real business operations.

2/10/2026

Most automation failures don’t come from bad tools.

They come from trying to automate decisions that shouldn’t be automated yet.

Small businesses don’t collapse because they didn’t automate enough.

They collapse because they automated past the point of safety—and didn’t know where the human should step back in.

This post is about defining control points and risk thresholds so your automation saves time without quietly creating expensive mistakes.

The Core Principle: Automate Execution, Not Judgment

Here’s the rule I use in real businesses:

If a mistake costs more than the time saved is worth, a human stays in the loop.

Automation is phenomenal at:

Repetition

Routing

Formatting

Validation

Drafting

It’s dangerous at:

Ambiguous judgment

Edge-case interpretation

High-impact decisions with incomplete context

Your job isn’t to automate everything.

It’s to decide where automation hands off to a human — and why.

The 3 Places Automation Breaks (If You Remove Humans)

1. When Inputs Are Ambiguous

Examples:

Customer messages

Free-form intake forms

Voice notes

CRM notes written by sales reps

LLMs can interpret these — but interpretation ≠ correctness.

If:

Two plausible interpretations lead to different actions, and

The wrong action creates downstream work or risk

→ Human confirmation is required
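One way to make this check concrete is sketched below. The `Interpretation` structure, the `plausible_floor` cutoff, and the action names are illustrative assumptions, not part of any particular tool; the idea is only that a message goes to a human whenever its plausible readings would trigger different actions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interpretation:
    """One plausible reading of an ambiguous input (hypothetical structure)."""
    label: str          # e.g. "refund_request"
    action: str         # the action this reading would trigger
    probability: float  # model's estimate that this reading is correct


def needs_human_confirmation(
    interpretations: list[Interpretation],
    plausible_floor: float = 0.25,  # assumed cutoff for what counts as "plausible"
) -> bool:
    """Return True when plausible readings disagree about what to do."""
    plausible = [i for i in interpretations if i.probability >= plausible_floor]
    distinct_actions = {i.action for i in plausible}
    # Zero plausible readings is also a reason to ask a human.
    return len(distinct_actions) != 1


message_readings = [
    Interpretation("refund_request", "open_refund_ticket", 0.55),
    Interpretation("shipping_question", "send_tracking_link", 0.40),
]
print(needs_human_confirmation(message_readings))  # True
```

Two readings that lead to the same action do not trigger a review here, which keeps the queue limited to cases where the ambiguity actually changes the outcome.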

2. When Outputs Affect Money, Reputation, or Trust

Examples:

Pricing changes

Refund approvals

Contract language

Public-facing responses

Automation can prepare these outputs.

It should not finalize them without review.
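A minimal sketch of the "prepare, don't finalize" split, using a refund as the example. The `RefundDraft` class and `issue_refund` function are hypothetical names for illustration; the point is that the finalizing step refuses to run until a named person has approved the draft.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RefundDraft:
    """A refund prepared by automation; it cannot be issued until approved."""
    order_id: str
    amount: float
    rationale: str                      # why the automation proposed this refund
    approved_by: Optional[str] = None   # set only by a human reviewer

    def approve(self, reviewer: str) -> None:
        if not reviewer.strip():
            raise ValueError("A named human reviewer is required")
        self.approved_by = reviewer


def issue_refund(draft: RefundDraft) -> str:
    """Finalize the refund, but only after human approval."""
    if draft.approved_by is None:
        raise PermissionError("Refund draft has not been reviewed by a human")
    return (
        f"Refund of {draft.amount:.2f} issued for {draft.order_id} "
        f"(approved by {draft.approved_by})"
    )


draft = RefundDraft("ORD-1001", 49.0, "Item arrived damaged per customer photo")
draft.approve("dana")
print(issue_refund(draft))
```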

3. When Errors Compound Quietly

The most dangerous failures don’t alert anyone.

Examples:

Misclassified leads routed for weeks

Incorrect tags poisoning analytics

AI-written summaries feeding other automations

If an error can persist undetected, you need a human checkpoint.
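One possible form of that checkpoint is a routine spot check: pull a small random sample of auto-handled items for a person to audit. The sketch below assumes a 5% sample rate and a hypothetical `pick_for_audit` helper; both are placeholders to tune for your own volume.

```python
import random
from typing import Optional, Sequence


def pick_for_audit(
    item_ids: Sequence[str],
    sample_rate: float = 0.05,   # assumed audit rate, adjust to your risk level
    seed: Optional[int] = None,  # pass a seed for a reproducible sample
) -> list[str]:
    """Choose a random subset of auto-handled items for human spot checks."""
    if not 0.0 < sample_rate <= 1.0:
        raise ValueError("sample_rate must be in (0, 1]")
    if not item_ids:
        return []
    rng = random.Random(seed)
    # Always audit at least one item so small batches are never skipped.
    sample_size = max(1, round(len(item_ids) * sample_rate))
    return rng.sample(list(item_ids), sample_size)


auto_tagged = [f"lead-{n}" for n in range(200)]
audit_batch = pick_for_audit(auto_tagged, sample_rate=0.05, seed=7)
print(len(audit_batch))  # 10
```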

The Control Point Framework (How to Design Human-in-the-Loop Systems)

Every automation should answer three questions:

1. What Decision Is Being Made Here?

Be explicit.

Bad:

“The AI decides the lead quality.”

Good:

“The AI assigns a confidence score and recommendation.”
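In code, the "good" version might look like a plain data record rather than a final verdict. The `LeadAssessment` name and its fields are assumptions for illustration; what matters is that the AI's output carries a score, a recommendation, and its reasoning, and that something else makes the decision.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadAssessment:
    """What the AI hands over: a scored recommendation, not a decision."""
    lead_id: str
    confidence: float     # 0.0 to 1.0
    recommendation: str   # e.g. "route_to_sales"
    reasoning: str        # short explanation a reviewer can read

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


assessment = LeadAssessment(
    lead_id="lead-42",
    confidence=0.72,
    recommendation="route_to_sales",
    reasoning="Company size fits; asked about pricing twice.",
)
print(assessment.recommendation, assessment.confidence)
```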

2. What Is the Cost of Being Wrong?

Quantify it.

| Cost Type | Example |
| --- | --- |
| Financial | Lost deal, refund issued incorrectly |
| Time | Rework, customer support escalations |
| Reputational | Wrong message sent to the wrong person |
| Systemic | Bad data propagating to other workflows |

If the cost of being wrong > the value of the time saved → add a human checkpoint.
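A rough way to run this comparison per item is sketched below. Weighting the cost by an error rate and pricing time at an hourly rate are my assumptions about how to put both sides in the same unit; the function name and all numbers are illustrative.

```python
def needs_human_checkpoint(
    error_rate: float,             # estimated share of items the automation gets wrong
    cost_per_error: float,         # money lost when one error slips through
    minutes_saved_per_item: float,
    hourly_rate: float,            # what an hour of the reviewer's time is worth
) -> bool:
    """Return True when the expected cost of errors outweighs the time saved."""
    expected_error_cost = error_rate * cost_per_error
    value_of_time_saved = (minutes_saved_per_item / 60) * hourly_rate
    return expected_error_cost > value_of_time_saved


# 2% of refunds wrong at $400 each, versus 5 minutes saved at $40/hour.
print(needs_human_checkpoint(0.02, 400.0, 5, 40.0))  # True

# Same error rate, but a mistake only costs $50.
print(needs_human_checkpoint(0.02, 50.0, 5, 40.0))   # False
```

In the first case the expected error cost is $8.00 per item against about $3.33 of time saved, so the checkpoint stays. In the second it drops to $1.00, and automation without review is defensible.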

3. What’s the Risk Threshold for Automation?

This is where most people fail.

Instead of “AI decides yes/no,” use confidence-based routing.

Example:

Confidence ≥ 85% → auto-approve

Confidence from 60% up to (but not including) 85% → human review

Confidence < 60% → manual handling

This turns automation into a filter, not a dictator.
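The thresholds above can be sketched as a small routing function. The `Route` enum and function name are illustrative; the 0.85 and 0.60 defaults mirror the example percentages and should be tuned to your own data.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    MANUAL_HANDLING = "manual_handling"


def route_by_confidence(
    confidence: float,
    auto_threshold: float = 0.85,    # at or above: auto-approve
    review_threshold: float = 0.60,  # at or above (but below auto): human review
) -> Route:
    """Map a model confidence score (0.0 to 1.0) to a handling route."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= auto_threshold:
        return Route.AUTO_APPROVE
    if confidence >= review_threshold:
        return Route.HUMAN_REVIEW
    return Route.MANUAL_HANDLING


for score in (0.91, 0.72, 0.40):
    print(score, route_by_confidence(score).value)
```

Checking the higher threshold first means a score such as 0.845 falls into human review rather than into a gap between bands.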

Real-World Example: AI Lead Qualification (Done Right)

Goal: Reduce manual lead review time by 70%

Bad Version (Common Failure)

AI scores leads

Auto-tags as “Qualified / Unqualified”

Routes directly to sales or nurture

Failure mode:

Sales wastes time on junk leads, or good leads get buried.

HighWay Robot Version (Survives Reality)

Step 1: AI Pre-Processing

Extracts firmographics

Identifies intent signals

Generates:

Lead summary

Qualification score

Reasoning

Step 2: Risk-Based Routing

High confidence → auto-routed to sales

Medium confidence → Slack/CRM review queue

Low confidence → nurture track

Step 3: Human Feedback Loop

Sales can override the AI’s decision

Overrides are logged

Monthly review improves prompts + thresholds

Result:

Time saved without blind trust

System improves instead of drifting
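For the monthly review in Step 3, one simple input is the override rate per confidence band. The sketch below assumes each logged outcome records its band and whether sales overrode the AI; the `Outcome` structure and the sample data are made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """One logged routing result (hypothetical log format)."""
    band: str         # "high", "medium", or "low" confidence band
    overridden: bool  # True if sales overrode the AI's recommendation


def override_rates(outcomes: list[Outcome]) -> dict[str, float]:
    """Share of decisions in each band that a human overrode."""
    totals: dict[str, int] = defaultdict(int)
    overrides: dict[str, int] = defaultdict(int)
    for outcome in outcomes:
        totals[outcome.band] += 1
        if outcome.overridden:
            overrides[outcome.band] += 1
    return {band: overrides[band] / totals[band] for band in totals}


# Example month: 20 high-confidence leads (1 overridden), 10 medium (4 overridden).
month_log = (
    [Outcome("high", False)] * 19 + [Outcome("high", True)]
    + [Outcome("medium", False)] * 6 + [Outcome("medium", True)] * 4
)
for band, rate in override_rates(month_log).items():
    print(f"{band}: {rate:.0%} overridden")
```

A rising override rate in the high-confidence band is a signal that the auto-route threshold is too low or the prompt needs work.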

Where Humans Must Always Stay In (Non-Negotiables)

No matter how good the model gets, keep humans involved in:

Final approvals for money movement

Public-facing brand voice

Legal or compliance-sensitive actions

Strategy-level decisions

Anything you’d hate to explain to a customer later

Automation should make humans faster, not absent.
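A sketch of how these non-negotiables can be enforced: a fixed set of categories that always go to a human, checked before any confidence threshold. The category names and the `requires_human` function are assumptions for illustration.

```python
# Categories that always require a human, regardless of model confidence.
# These labels are illustrative; map them to your own action types.
ALWAYS_REVIEW = frozenset({
    "money_movement",
    "public_brand_voice",
    "legal_compliance",
    "strategy",
})


def requires_human(
    action_category: str,
    confidence: float,
    auto_threshold: float = 0.85,  # assumed cutoff for everything else
) -> bool:
    """Non-negotiable categories win over confidence every time."""
    if action_category in ALWAYS_REVIEW:
        return True
    return confidence < auto_threshold


print(requires_human("money_movement", 0.99))  # True
print(requires_human("lead_tagging", 0.99))    # False
```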

Designing the Handoff (This Is the Part Most Miss)

A human-in-the-loop system fails if the human step is:

Vague

Slow

Burdensome

Design the handoff deliberately.

Good Handoff Design:

Clear yes/no or edit choices

Context included (why the AI decided this)

One-click approve / revise

Feedback captured automatically

Bad Handoff Design:

“Review this” with no guidance

No explanation of AI reasoning

Manual copy-paste edits

Feedback goes nowhere

If the human hates the handoff, they’ll bypass the system — and your automation dies.
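A good handoff can be sketched as a review task that arrives with its context attached and records the reviewer's answer automatically. The `ReviewTask` class, its fields, and the three allowed decisions are illustrative assumptions, not a specific product's API.

```python
from dataclasses import dataclass, field

ALLOWED_DECISIONS = ("approve", "revise", "reject")


@dataclass
class ReviewTask:
    """Everything a reviewer needs in one place, plus a feedback trail."""
    item_id: str
    proposed_action: str   # what the AI wants to do
    ai_reasoning: str      # why it wants to do it
    confidence: float
    feedback_log: list[dict] = field(default_factory=list)

    def resolve(self, reviewer: str, decision: str, note: str = "") -> dict:
        """Record a one-click decision; the feedback is captured as a side effect."""
        if decision not in ALLOWED_DECISIONS:
            raise ValueError(f"decision must be one of {ALLOWED_DECISIONS}")
        record = {
            "item_id": self.item_id,
            "reviewer": reviewer,
            "decision": decision,
            "note": note,
            "ai_confidence": self.confidence,
        }
        self.feedback_log.append(record)
        return record


task = ReviewTask(
    item_id="lead-42",
    proposed_action="route_to_sales",
    ai_reasoning="Company size fits; asked about pricing twice.",
    confidence=0.72,
)
task.resolve("dana", "revise", note="Existing customer, send to account manager")
print(task.feedback_log[0]["decision"])  # revise
```

Because the feedback record is written inside `resolve`, the reviewer never has to file it separately, which is what keeps the loop alive.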

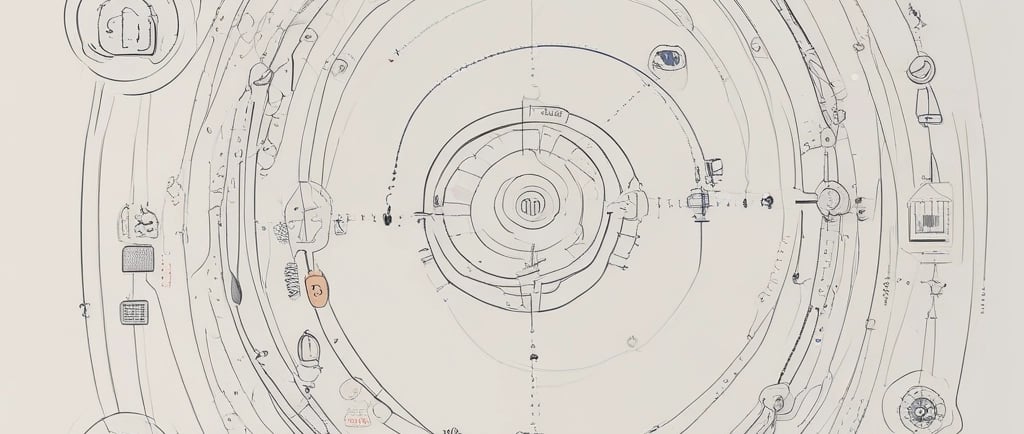

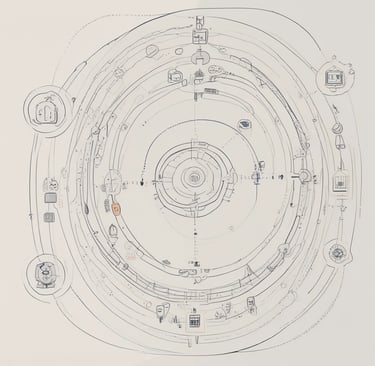

The Automation Maturity Curve (Know Where You Are)

Stage 1: Manual with AI Assistance

AI drafts, humans decide

Stage 2: AI with Human Approval

AI acts, humans approve exceptions

Stage 3: Confidence-Based Autonomy

AI handles low-risk decisions

Humans handle edge cases

Stage 4: Continuous Improvement Loop

Human feedback trains better routing

Fewer reviews over time

Trying to jump from Stage 1 → Stage 4 is how systems break.
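If you track the stage per workflow, the "no skipping" rule can be enforced with a guard like the one below. The `Stage` enum names and the one-step-at-a-time rule are my reading of the curve, offered as a sketch rather than a fixed policy.

```python
from enum import IntEnum


class Stage(IntEnum):
    AI_ASSISTANCE = 1            # AI drafts, humans decide
    HUMAN_APPROVAL = 2           # AI acts, humans approve exceptions
    CONFIDENCE_AUTONOMY = 3      # AI handles low-risk decisions
    CONTINUOUS_IMPROVEMENT = 4   # feedback tunes routing over time


def can_advance(current: Stage, target: Stage) -> bool:
    """Allow moving forward only one stage at a time."""
    return target - current == 1


print(can_advance(Stage.AI_ASSISTANCE, Stage.HUMAN_APPROVAL))          # True
print(can_advance(Stage.AI_ASSISTANCE, Stage.CONTINUOUS_IMPROVEMENT))  # False
```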

The Real Win: Fewer Decisions, Not Fewer People

Human-in-the-loop automation isn’t about distrust.

It’s about placing humans where judgment matters most and letting machines handle everything else.

If your automation removes thinking, it will fail.

If it removes friction, it will scale.

That’s the difference.

Industry practitioners make the same point: human-in-the-loop systems balance AI efficiency with human judgment, which supports accuracy, accountability, and long-term trust across use cases. For a detailed treatment of how practitioners define and implement this approach, see “Human-in-the-Loop AI – Keeping Humans at the Heart of Automation” from the Ignatiuz AI Center of Excellence.

Want the exact prompts behind these systems?

The HighWay Robot Ultimate Prompt Pack Bundle includes 160 production-tested prompts across four packs — designed specifically for human-in-the-loop workflows, risk thresholds, and AI decision transparency.

These aren’t “automation hacks.” They’re control-layer prompts that help you decide where AI should stop and when humans should step in.

If you’re building real workflows (not demos), this saves weeks of trial and error.
